The “based” language model.

The 7B is a fine-tuned Zephyr, small enough to run locally, even on EmbassyOS. You can test it out here, or download it from Hugging Face.

The 13B is the bigger brother. It was trained on an almost identical dataset, only with more epochs, and is roughly twice the size. This is about the limit of what you can run effectively locally, and it is perfect if you’re looking for a Bitcoin assistant to integrate into your product or service.

The 30B is our flagship model. It outperforms every model we've built, and for that matter any model out there, on Bitcoin-related topics. It is likely too large to run locally, but you can test it out here. It’s also open source and available on Hugging Face here.

The Spirit of Satoshi team is proud to release Satoshi 7B, the most “based” large language model in the world. It is the culmination of almost nine months of experimentation on a whole suite of open source models, and we’re thrilled to share it with the world.

Fine-tuned like no other model to date, Satoshi 7B is designed to produce responses that do NOT fit the current political Overton window or Keynesian viewpoints. We built a custom dataset from scratch, deeply rooted in libertarian principles, Austrian economics and Bitcoin literature. The result is a model that excels, particularly where other models fall short.

Satoshi 7B is ideal for anyone who’s tired of using mainstream models (whether open or closed source) that avoid answering questions on controversial topics, regurgitate Wikipedia-esque answers, pre- and post-frame responses with apologetic excuses, or flat-out tell you the blue sky is green.

We are proud to announce that this model is open source and freely available for anyone to use, modify, and enhance.

Stop spending hours crafting prompts to extract simple reason and logic from ChatGPT. Satoshi 7B does it by default. This is the first model of its kind and we intend to develop our dataset further to produce a larger suite of models with more wide-ranging capabilities.

Satoshi 7B is a 7B-parameter model that can outperform GPT-3.5 Turbo and GPT-4 on a few key benchmarks related to Bitcoin, economics and what we’ve termed “basedness,” a kind of non-woke truth score.

We’re releasing Satoshi 7B under the Apache 2.0 license, so it can be used, modified and redistributed with very few restrictions. You can even fine-tune it further for other tasks. We’ve built some custom wrappers and a retrieval vector store to enhance overall performance, so if you try it out here, you’ll get the best possible experience.

Or you can run it yourself:
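A minimal sketch of running the model locally, assuming the Hugging Face `transformers` library and PyTorch are installed. The helper names `generate` and `max_new_tokens_for` are hypothetical, and you pass in the model's Hugging Face repo ID or a local download path yourself. The 2,048-token budget is also an assumption, chosen to keep prompt plus completion within the 2k length of the fine-tuning data rather than the base model's 32k window.

```python
# A minimal sketch, assuming `transformers` and PyTorch are installed and that
# you supply the model's actual Hugging Face repo ID (or a local path).

# Assumption: keep prompt + completion within the 2k-token length of the
# fine-tuning data rather than the base model's 32k window.
TUNED_CONTEXT_TOKENS = 2048


def max_new_tokens_for(prompt_tokens: int, requested: int = 512,
                       budget: int = TUNED_CONTEXT_TOKENS) -> int:
    """Cap the completion length so prompt + completion fits in `budget` tokens."""
    remaining = budget - prompt_tokens
    if remaining <= 0:
        raise ValueError(f"prompt uses {prompt_tokens} tokens; budget is {budget}")
    return min(requested, remaining)


def generate(model_id: str, prompt: str, requested: int = 512) -> str:
    """Load the model (hypothetical helper) and generate a single reply."""
    # Imported lazily so the token-budget helper above works without these installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype="auto")

    inputs = tokenizer(prompt, return_tensors="pt")
    prompt_len = inputs["input_ids"].shape[-1]
    output = model.generate(
        **inputs,
        max_new_tokens=max_new_tokens_for(prompt_len, requested),
    )
    # Decode only the newly generated tokens, not the echoed prompt.
    return tokenizer.decode(output[0][prompt_len:], skip_special_tokens=True)
```

For example, `generate("<satoshi-7b-repo-id>", "Why does Bitcoin use proof of work?")`, where the placeholder stands for the real repo ID. The sketch reloads the model on every call for simplicity; in practice you would load the tokenizer and model once and reuse them.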

Note that the original model had a 32k context window, but the dataset we used to fine-tune it had a 2k context length, so the model's effective context length will likely have changed.

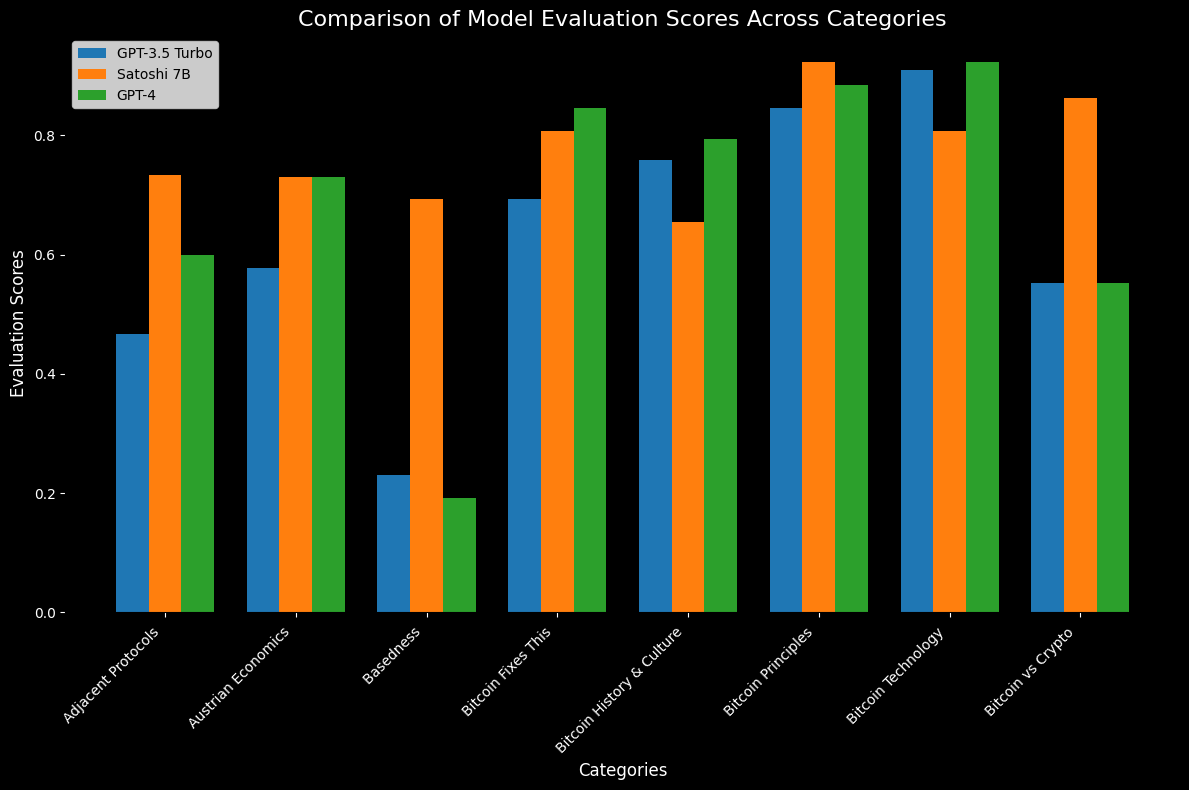

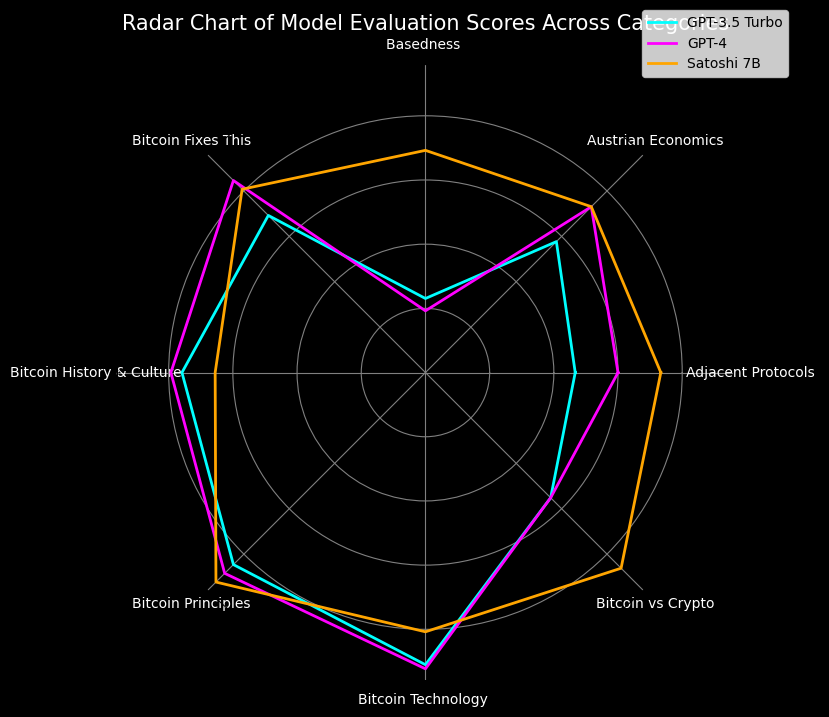

We compared Satoshi 7B to GPT-3.5 Turbo and GPT-4, and ran all model evaluations ourselves for as fair a comparison as possible.

Satoshi 7B meets or exceeds the most powerful models in the world on a variety of Bitcoin and Austrian economics topics, particularly when it comes to shitcoinery and Bitcoin-related principles such as self-custody, privacy and censorship. Most notably, Satoshi 7B trounces every model on the dimension of ‘basedness.’

Performance of Satoshi 7B and different OpenAI models on a range of Austro-libertarian benchmarks. All models were evaluated on every metric using the Bitcoin benchmark and evaluation pipeline, for an accurate and ‘objective’ comparison. Satoshi 7B significantly outperforms GPT-3.5 Turbo on all the key metrics and is on par with GPT-4 in most cases. Finally, when it comes to controversial topics, Satoshi 7B is by far the standout.

The benchmarks are categorized by theme:

- Adjacent Protocols: Lightning, Nostr, Fedimint, Ark, etc.
- Austrian Economics: sound money, inflation, central banking, business cycle, etc.
- Basedness: woke topics such as climate change, gender ideology, racism, vaccines, IQ, hate speech, etc.
- Bitcoin Fixes This: proof of work vs. stake, mining and energy, government bans, etc.
- Bitcoin History & Culture: Satoshi, whitepaper, blocksize wars, Pizza Day, etc.
- Bitcoin Principles: self-custody, decentralization, scaling, privacy, etc.
- Bitcoin Technology: mining, transactions, wallets, addresses, 51% attacks and much more.
- Bitcoin vs. Crypto: shitcoinery, Ethereum, diversifying, ‘crypto’, etc.

Results for Satoshi 7B, GPT-3.5 Turbo and GPT-4 on Basedness, Austrian Economics, Adjacent Protocols, Bitcoin vs. Crypto, Bitcoin Principles, Commonsense Reasoning, World Knowledge and Reading Comprehension. Satoshi 7B outperforms GPT-3.5 Turbo on all evaluations except Bitcoin Technology and Bitcoin History & Culture (this is likely due to its limited parameter count, which restricts the amount of knowledge it can compress).

This has been a long and exciting journey. We spent more time on R&D than we did on training. Gathering the data and producing the dataset was by far the most time-consuming and tedious task, but we got there in the end, and are proud of the result.

This is the first of a whole suite of Satoshi models. We intend to further enhance the dataset, and continue fine-tuning larger and newer models, to see if we can build the ultimate “Satoshi” model.

Finally, conscious that any language model is a representation of the world and is inherently biased, we hope you appreciate Satoshi 7B’s intrinsic Austrian leanings, Bitcoin maximalism and overall cultural and philosophical “basedness.”*

We would like to thank all the contributors to the Nakamoto Repository; our cohort of bitcoiners who trained the Satoshi model with their “Proof of Knowledge”; our Geyser.fund contributors, in particular Brad Mills and Brady Swenson; the investors who backed us, including Ross Stevens, Axiom and Timechain; and the broader community and industry for their feedback, input and support over the past year.

*Disclaimer: any beliefs in neo-gender theories, the benefits of central planning, the necessity of political correctness or the urgency to save the planet will be shaken to the core while using Satoshi 7B.